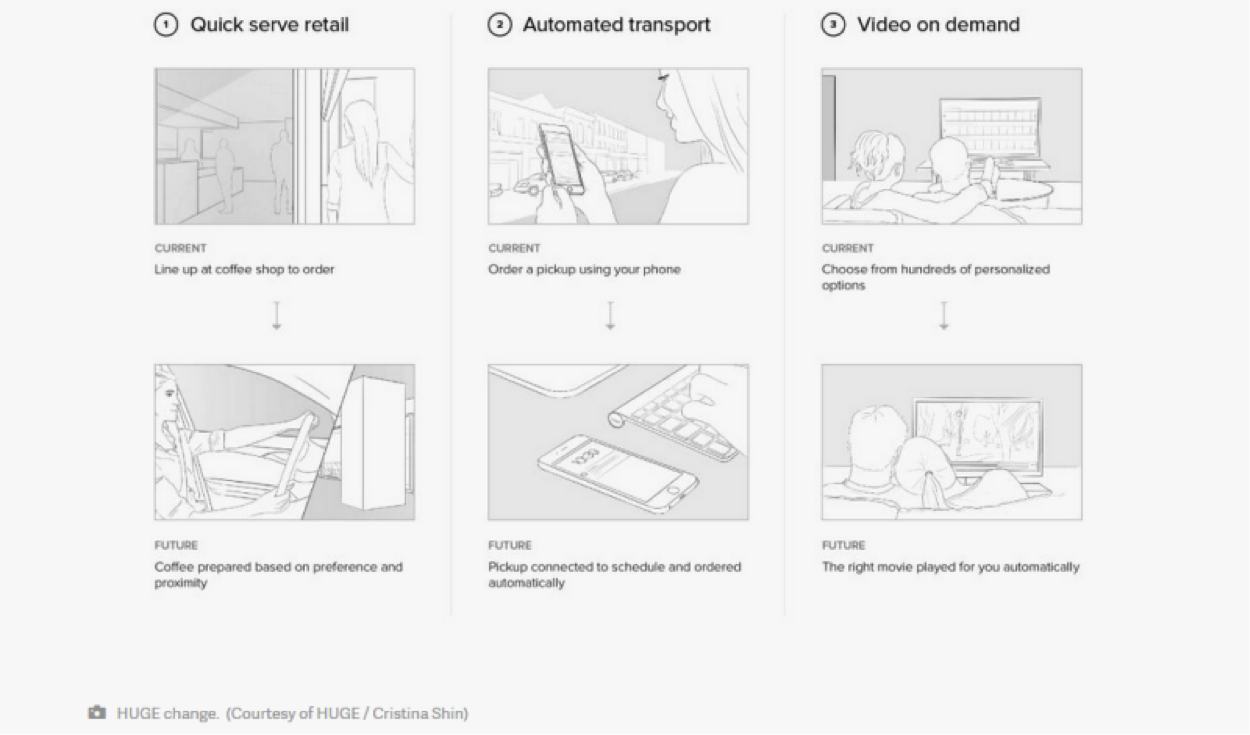

Anticipatory design is a term coined by Aaron Shapiro, CEO of the creative design agency Huge, that frames the elimination of decision fatigue as the next ideal to be reached in web and product design. One example he cites in his manifesto on the subject is an application that automatically books a flight for you when a meeting that requires air travel is scheduled on your calendar.

While there are some definite benefits to a system like this one, it’s not wholly without risk.

Anticipatory design is a brilliant innovation. If put into effect, it could simplify lives, alleviate stress, and streamline the details of difficult processes that users have to go through while visiting their favorite sites every day. To draw from another of Shapiro’s examples (one he’s actually putting into practice at his base of operations in Atlanta), imagine a coffee shop that produces a customer’s favorite order as they arrive by detecting their proximity via NFC technology.

This is certainly appealing enough. Nobody wants to wait in line.

Or think of an automatic airline booking that occurs when a frequent-flyer consultant (imagine this consultant is male, for pronoun purposes) schedules a meeting with one of his cross-country clients. The airline emails him his boarding pass and he’s set to go. Convenience, simplicity, and serendipity. Such a streamlining technology could be potent indeed at minimizing the friction between what we want to do and actually accomplishing it.

However, the whole thing has a bit of an Orwellian feel to it. Such efficiency isn’t just useful, it’s kind of ominous.

The mantras that Anticipatory design advocates chant at monotonous intervals don’t exactly do it any favors:

- “Flow not friction”

- “Convenience not choice”

- “Efficiency not freedom”

- “War is peace”

- “Freedom is slavery”

- “Ignorance is strength”

Okay, so I added a few 1984 quotes in there. Can you guess which is which?

This idea has already existed in less intrusive forms (like Amazon recommendations or YouTube suggestions), but bringing it into your waking life by allowing many of your more mundane decisions to be automated puts you in a uniquely vulnerable position. Moreover, it hinders your ability to customize your own experiences.

In this article, we’ll unwrap these issues and see if there isn’t some kind of balance that can be reached between the attractiveness of this innovation and the downright horror of volunteering your freedom in favor of convenience.

The Privacy Problem

Image Credit: Flickr

For such a system to work, users would have to give up control and any semblance of privacy to an automated system which would then make bespoke life decisions on their behalf. While this seems to be the direction that technology is headed anyway, is that what we really want?

Wearables, smart devices, home automation, and NFC technology have all made data collection ubiquitous, nigh universal even. You can hardly interact with any interface without your actions being recorded, categorized, and stored in a massive database on a server in an undisclosed location. Additionally, these databases probably have government-sponsored backups at the giant NSA data center in Bluffdale, Utah. So if we can’t have disclosure, at least we can have assumptions.

The point is that the vast majority of humanity walks around utterly vulnerable to exploitation based on precise, robust behavioral data that we’ve given up freely in favor of convenience in goods and services. It’s already the status quo. Big Brother is already in our living rooms, as it were. Still, the oppression isn’t overt. Nothing so sinister. Our exploitation is one of marketing tactics: cleverly served content designed to prey upon our recorded predispositions in buying, browsing, and other observable routines.

Before you dismiss such speculation as typical tin-foil hat conspiracy nonsense, consider that examples of data exploitation are already occurring:

- Health insurance providers basing rates on data collected from wearables

- Car insurance providers basing rates on data collected from sensors installed in vehicles

- Life insurance providers getting in on the action too

- “Minority Report” styled predictive policing

- Employers monitoring employees to gauge productivity

California even passed a law to prevent employers from implanting microchips in their employees. Can we go ahead and make that federal?

Frighteningly, companies and corporations may be the least of our worries when it comes to malevolent usage of our personal data. After all, they still need us to buy something from them, so subtle coercion is still their main means of offense. Governments and hackers aren’t really held to the same constraints.

The fact is, when you opt in to anticipatory design, big brands, governments, and any other party with the savvy to hack a server will gain far greater access to private behavioral data. Not just browsing behavior, mind you, but your favorite route to work, personal grooming habits, purchase histories, and whether or not you’re pregnant: basically everything you do and, more often than not, what you might be thinking as well.

It’s not just metadata (data about data, which is often treated as non-identifying at the individual level) either. This is personalized, individualized, custom content serving that requires the innermost details of your private life to be on display for anyone with access to a particular network to see. Consequently, this represents a major opportunity for exploitation.

Worst of all, when you’re sacrificing your choices in favor of uninterrupted “flow,” the chances that you’ll notice when and how you’re being manipulated shrink considerably.

The real question is, do you trust the people handling your data? We’ve all basically decided to trust Google, though many of us are unaware of the extent to which our search results are manipulated, the extent to which our user data from the myriad G-applications is put toward finding ways to suck dollars out of our pockets, and definitely the degree to which the corporate giant plays favorites with content publishers, advertisers, and so on.

All this from a company whose unofficial motto is “Don’t be evil.”

Digit.co and the Circle of Trust

Let’s take an example of an Anticipatory design product that’s already in use, Digit.co. This digs right to the heart of the matter, because what’s more personal than your finances? Digit is an anticipatory product that examines your buying behavior, budgets, spending, and other financial data. It uses this data to make intelligent choices about how much you should be saving every month. It automatically takes money from your checking account and moves it to a savings account. It’s not unlike the plot of Superman III or Office Space.

The company is so confident in its process that it offers to reimburse patrons for any money they lose to overdrafts caused by missing funds in their checking account. Nothing so malevolent here at first glance. Your funds are held in an FDIC-insured account, the site swears that your information will never be sold to a third party, and the security specs on your personal data seem pretty solid. It’s got stellar reviews, and by all accounts it goes out of its way to make users comfortable with the service.

This is anticipation done right. They’ve done everything possible to be transparent in their business practices as well as to safeguard privacy and user data. All that’s left is to decide whether or not you trust the service. In this regard, I’d say the business has taken a cue or two from Ann Cavoukian, the former Information and Privacy Commissioner of Ontario, Canada.

The Privacy by Design approach that Ms. Cavoukian suggested is something every designer dealing with behavioral datasets should familiarize themselves with.

Unfortunately, providing the proper degree of privacy to anticipatory design users isn’t my only issue with this new idea. There’s also the suppression of the human spirit to be considered. When I hear “Efficiency not Freedom” and “Convenience not Choice” my jimmies are immediately rustled.

The Human Element

Image credit: Pixabay

My main objection to Anticipatory design is an ideological one. This well-intended design approach consciously attempts to limit the need for choice and eccentricities in behavior. If Anticipatory design experiences widespread adoption, we’re talking about a drastic deterrent to experimentation on a societal level. That scares the hell right out of me.

I don’t want a society that caters to the lowest common denominator. Preferences and routines are one thing, but a society that’s uniform in its daily decision-making seems like it would be even less tolerant of changing circumstances. In a tech world that embraces change as an almost universal good, and likewise values the discarding of dross in outdated practices or techniques as a necessary form of maintenance, shouldn’t we be encouraging greater freedom of choice in how users interact with designs?

Decision fatigue is a real concern, granted. You want to limit the difficulties and friction that a user has to undergo in order to achieve a goal. But is it really healthy to eliminate so much of the work we have to do? In my mind, this creates a culture of laziness, rather than efficiency. People are always looking for shortcuts, but in important undertakings, the mastery of a skill for example, there are no shortcuts. It’s practice, repetition, and determination that win the day. Having your banking, travel plans, coffee orders, and so on all automated can certainly provide short-term benefits, but I think it behooves us to really consider what effect removing each routine will have in the long term.

Will the removal of choice in so many mundane situations make us more effective at living or just more dependent on providers of products and services? Isn’t it better to design an open-ended interface that allows for experimentation and creation, rather than narrowing down the possible paths of navigation to whatever is mostly routine for the user? If the anticipatory path is widely adopted, isn’t that likely to create more uniformity than differentiation?

Effective Anticipatory design would have to recognize the wishes of certain users who want to be anal retentive. Many users are glad to be directed; they don’t want to worry over details and are only concerned about the end result.

Then there are the process people: folks who are concerned about the way decisions are made and who want the option to adjust the parameters behind each decision. The impulse with things like Anticipatory design and ubiquitous data collection is to make processes, parameters, rulesets, and the like more opaque.

Opacity has its place, but transparency takes precedence when it comes to the ways that brands are using private data.

Anticipatory Design and UX

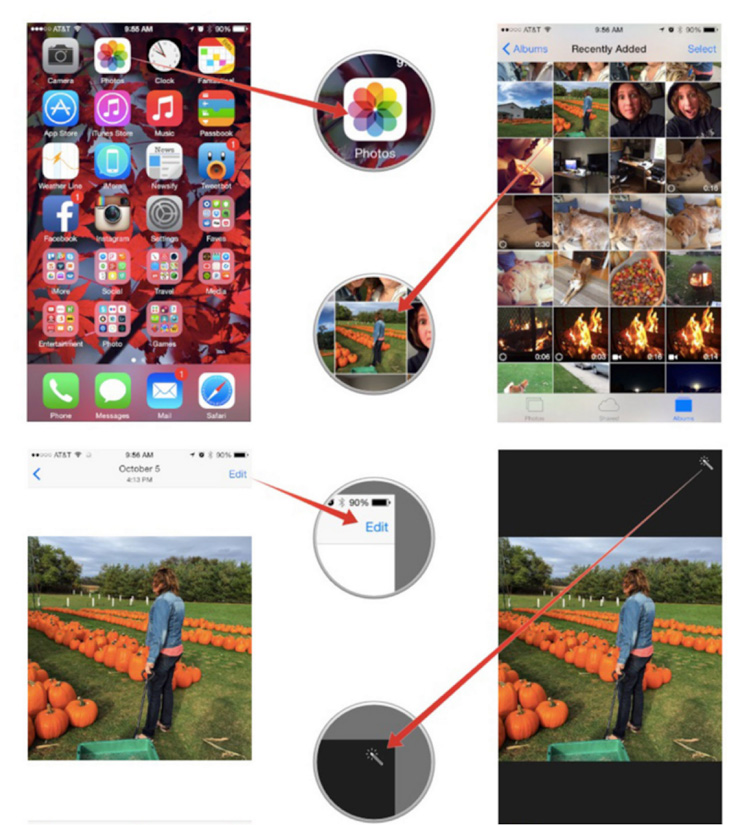

When designing systems that personalize content experiences through behavior-driven, anticipatory processes, it will be very important to allow users the option to adjust the level of data that is accessed as well as the way in which the content is served. The auto-enhance feature in iOS Photos (pictured above) makes no such allowances for you.

You have to turn off the enhance feature if you’d like to take a turn at tweaking your own photography. This is a hobby that many an amateur photog would find engaging and gratifying, but in an anticipatory framework, they’d be denied such an opportunity.

To give another example, what if you don’t want the same type of coffee every time you pull up to the coffee shop? Certainly a regular order is regular for a reason, but what about the many cases when you’re in the mood for something different? Sometimes I feel like fresh roasted beans from the hills of Colombia. And other times I’m in a tropical island Kona kind of mood. Where’s the allowance for my quirks in all this anticipation? Adding an optional touchpoint where you can change your “regular” order for the day would address this quite nicely.

And that’s the kind of thing I’m advocating for here. If anticipation requires the elimination of all choice, then I’m opting out. However, if you can make something automated, while still retaining my ability to adjust or enhance my own experience, based on what I tell the machine, rather than what it observes about me, then I’m back on board.

Of course, this is only provided the company in question has provided me with a UX that I feel I can trust.
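To make the coffee shop touchpoint a little more concrete, here is a minimal Python sketch of the idea, written under my own assumptions. The names `OrderDecision` and `resolve_order` are hypothetical and not taken from any real ordering system. The rule is simple: whatever the customer explicitly says today wins, and the predicted "regular" is only a fallback. The result also records which of the two was used, so the automation stays visible.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OrderDecision:
    item: str
    source: str  # "customer" if explicitly chosen today, "predicted" otherwise


def resolve_order(regular: str, todays_override: Optional[str] = None) -> OrderDecision:
    """Prefer what the customer told us today over what the system inferred."""
    if todays_override:
        return OrderDecision(item=todays_override, source="customer")
    return OrderDecision(item=regular, source="predicted")


# No override: the shop falls back to the usual order.
print(resolve_order("Colombian drip"))

# The customer is in a Kona mood today, so their stated choice wins.
print(resolve_order("Colombian drip", todays_override="Kona pour-over"))
```

The design choice worth noticing is the `source` field: the system anticipates by default, but it never hides whether a decision came from me or from its guess about me.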

Conclusion

Anticipatory design has enormous potential for simplifying our lives and eliminating decision fatigue. However, as with most other paradigm-shifting technology out there, the consequences of its implementation must be carefully weighed. It’s not enough to automate choices. You have to be honest about how these choices are automated. You need to explain to users:

- Where the data comes from

- How it’s examined

- Who has access to it

- And what’s being done with it

Moreover, you have to allow experimentation within set constraints. Anticipate, but don’t exclude completely. If a user wants choice, give them choice. Digit does this by allowing users to adjust its saving aggressiveness. In the coffee shop example, I mentioned that an extra touchpoint for adjusting orders would be a welcome addition. These kinds of built-in options can be limited in scope and still serve users who may not want their entire routine automated.
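As a rough illustration of automation with a user-controlled dial, here is a small Python sketch. To be clear, this is not Digit's actual algorithm, which I have no visibility into. The function `propose_transfer`, the `SavingsProposal` record, the `safety_buffer` default, and the "spare cash times aggressiveness" rule are all assumptions of mine, chosen only to show the shape of the idea: the user sets the aggressiveness, and every proposal carries a plain statement of what data was used and how.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SavingsProposal:
    amount: float         # how much the system suggests moving to savings
    data_used: List[str]  # where the data came from, shown to the user
    reasoning: str        # how the data was examined, in plain words


def propose_transfer(balance: float, upcoming_bills: float,
                     aggressiveness: float,
                     safety_buffer: float = 100.0) -> SavingsProposal:
    """Suggest a transfer scaled by a user-chosen aggressiveness (0.0 to 1.0)."""
    if not 0.0 <= aggressiveness <= 1.0:
        raise ValueError("aggressiveness must be between 0.0 and 1.0")
    # Never propose money that is needed for bills or the safety buffer.
    spare = max(0.0, balance - upcoming_bills - safety_buffer)
    amount = round(spare * aggressiveness, 2)
    return SavingsProposal(
        amount=amount,
        data_used=["checking balance", "upcoming bills"],
        reasoning=(f"Spare cash of {spare:.2f} after bills and a "
                   f"{safety_buffer:.2f} buffer, scaled by {aggressiveness:.0%}."),
    )


proposal = propose_transfer(balance=1000.0, upcoming_bills=400.0, aggressiveness=0.5)
print(proposal.amount, proposal.data_used, proposal.reasoning)
```

Setting `aggressiveness` to zero turns the automation off without uninstalling anything, which is the kind of limited, built-in choice I have in mind.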

The idea here is to avoid automating humanity to the point where people become actual automatons. Allow room for creativity and experimentation. And above all, be transparent about your business practices when it comes to sensitive data collection.

I’m certain I’ve missed a few ways to make Anticipatory design a bit more palatable. Feel free to add anything you think might be helpful.